In this release we have updated the dashboard of our Hypernode Control Panel to display more configurable settings for your Hypernode, and we have made our Hypernode configuration management more resilient against stubborn newrelic-daemon processes.

Additional Dashboard settings

During the development of our new Hypernode Control Panel, which is going to replace our legacy Service Panel, we chose an API-first approach. This means that our Control Panel web application is simply a front end for our public-facing API. Everything we implement in the Control Panel you could in theory also implement yourself in your own web application or command-line tool.

This makes it very easy for us to add certain features to the Control Panel, like displaying the values that you can configure from the command line for your Hypernode. If you are logged in to your Hypernode with SSH, you can run the hypernode-systemctl settings --help command to see which settings you can currently configure using the Hypernode API.

$ hypernode-systemctl settings --help

usage: hypernode-systemctl settings php_version 7.1

The possible values are:

enable_ioncube ['True', 'False']

password_auth ['True', 'False']

openvpn_enabled ['True', 'False']

unixodbc_enabled ['True', 'False']

supervisor_enabled ['True', 'False']

mailhog_enabled ['True', 'False']

modern_ssl_config_enabled ['True', 'False']

support_insecure_legacy_tls ['True', 'False']

modern_ssh_config_enabled ['True', 'False']

mysql_tmp_on_data_enabled ['True', 'False']

redis_persistent_instance ['True', 'False']

firewall_block_ftp_enabled ['True', 'False']

disable_optimizer_switch ['True', 'False']

mysql_disable_stopwords ['True', 'False']

mysql_enable_large_thread_stack ['True', 'False']

mysql_enable_explicit_defaults_for_timestamp ['True', 'False']

rabbitmq_enabled ['True', 'False']

elasticsearch_enabled ['True', 'False']

elasticsearch_version ['5.2', '6.x', '7.x']

varnish_esi_ignore_https ['True', 'False']

varnish_large_thread_pool_stack ['True', 'False']

varnish_enabled ['True', 'False']

blackfire_enabled ['True', 'False']

permissive_memory_management ['True', 'False']

varnish_secret string

varnish_version ['4.0']

mysql_ft_min_word_len ['4', '2']

php_version ['5.6', '7.0', '7.1', '7.2', '7.3', '7.4', '8.0']

mysql_version ['5.6', '5.7', '8.0']

blackfire_server_id string

blackfire_server_token string

override_sendmail_return_path string

php_apcu_enabled ['True', 'False']

php_amqp_enabled ['True', 'False']

managed_vhosts_enabled ['True', 'False']

nodejs_version ['6', '10']

new_relic_enabled ['True', 'False']

new_relic_app_name string

new_relic_secret string

datadog_enabled ['True', 'False']

datadog_apikey string

datadog_region string

positional arguments:

{enable_ioncube,password_auth,openvpn_enabled,unixodbc_enabled,supervisor_enabled,mailhog_enabled,modern_ssl_config_enabled,support_insecure_legacy_tls,modern_ssh_config_enabled,mysql_tmp_on_data_enabled,redis_persistent_instance,firewall_block_ftp_enabled,disable_optimizer_switch,mysql_disable_stopwords,mysql_enable_large_thread_stack,mysql_enable_explicit_defaults_for_timestamp,rabbitmq_enabled,elasticsearch_enabled,elasticsearch_version,varnish_esi_ignore_https,varnish_large_thread_pool_stack,varnish_enabled,blackfire_enabled,permissive_memory_management,varnish_secret,varnish_version,mysql_ft_min_word_len,php_version,mysql_version,blackfire_server_id,blackfire_server_token,override_sendmail_return_path,php_apcu_enabled,php_amqp_enabled,managed_vhosts_enabled,nodejs_version,new_relic_enabled,new_relic_app_name,new_relic_secret,datadog_enabled,datadog_apikey,datadog_region}

positional_value

optional arguments:

-h, --help show this help message and exit

--value DEPRECATED_VALUE

This option is deprecated. Use the positional value instead

We update this list of settings automatically when we add new functionality to the API. For example, the opt-in php_amqp_enabled module is a setting that we added recently to support the software that some of our users run.
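
Because the help text lists every setting together with its allowed values, it is easy to turn into a data structure for your own tooling. The following is a minimal Python sketch, not part of the Hypernode tooling: the function name parse_settings_help and the shortened SAMPLE text are illustrative assumptions, and the parser assumes the help output keeps the layout shown above.

```python
import ast

# Shortened copy of the help output shown above, used only for illustration.
SAMPLE = """usage: hypernode-systemctl settings php_version 7.1

The possible values are:

varnish_enabled ['True', 'False']

varnish_secret string

nodejs_version ['6', '10']

php_version ['5.6', '7.0', '7.1', '7.2', '7.3', '7.4', '8.0']

positional arguments:
"""


def parse_settings_help(help_text):
    """Map each setting name to its list of allowed values.

    Free-form settings (listed as 'string' in the help text) map to None.
    """
    settings = {}
    in_values = False
    for raw_line in help_text.splitlines():
        line = raw_line.strip()
        if line == "The possible values are:":
            in_values = True
            continue
        if line.startswith("positional arguments:"):
            break  # the list of settings ends here
        if not in_values or not line:
            continue
        name, _, spec = line.partition(" ")
        spec = spec.strip()
        if spec.startswith("["):
            # The allowed values are printed as a Python-style list literal.
            settings[name] = ast.literal_eval(spec)
        else:
            settings[name] = None
    return settings


if __name__ == "__main__":
    for name, allowed in parse_settings_help(SAMPLE).items():
        print(name, allowed if allowed is not None else "(free-form string)")
```

On a Hypernode you could feed the function the real output of hypernode-systemctl settings --help instead of the sample text.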

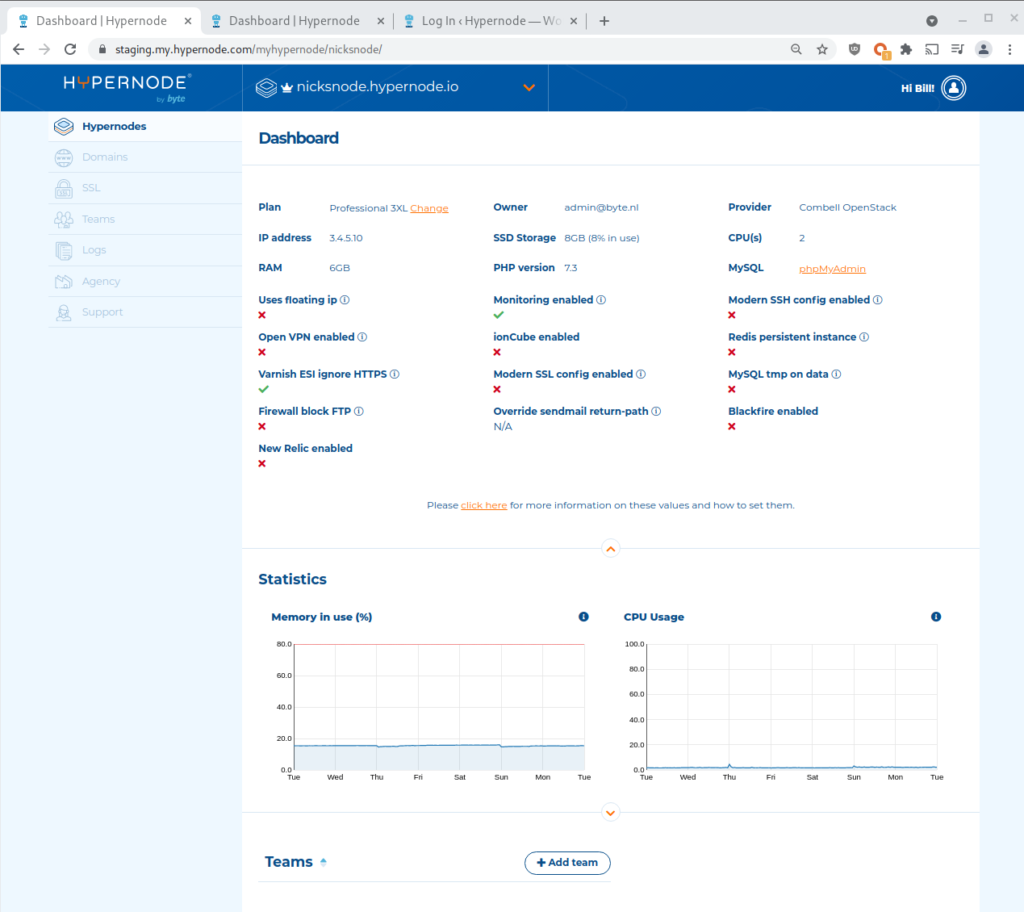

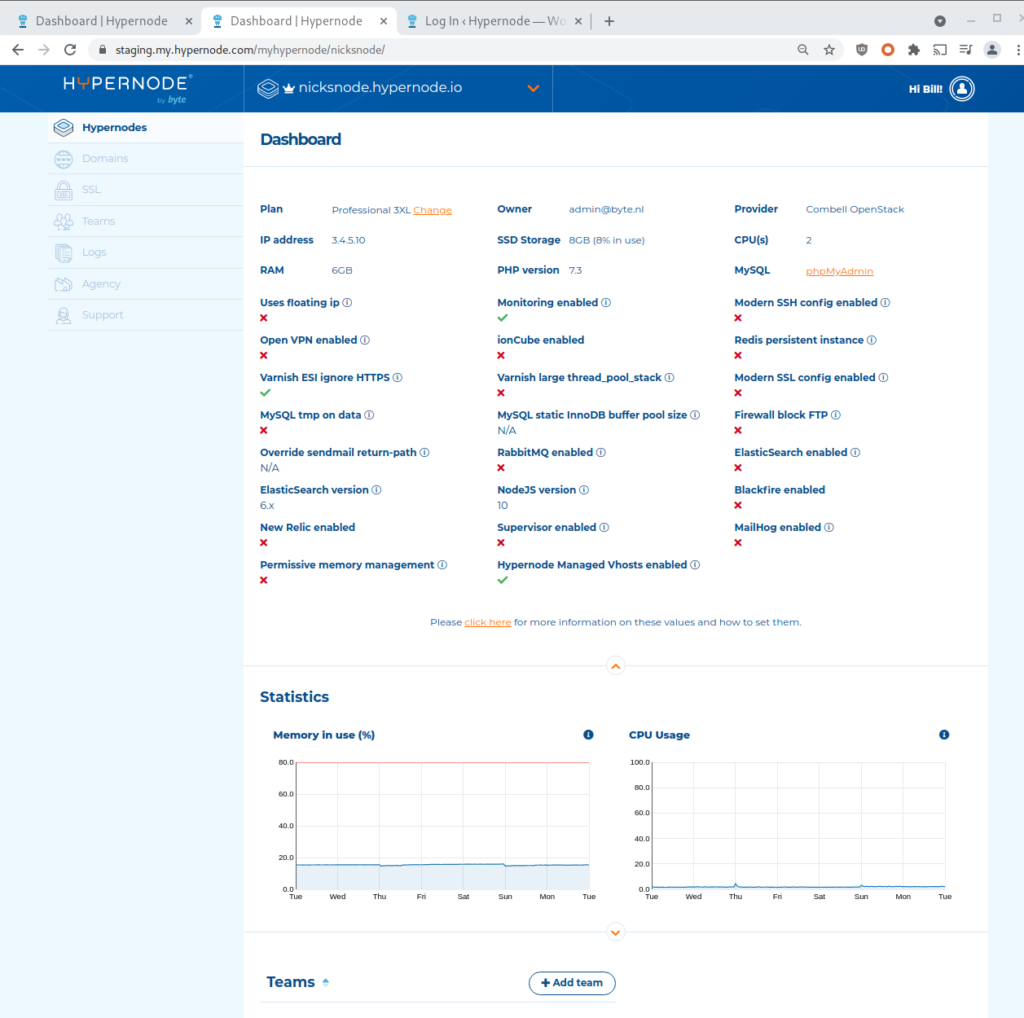

The dashboard page in the Control Panel displays some of these values to give you quick insight into what’s configured for this Hypernode. In this change we’ve selected a few more values to display on this page as well.

Because not all settings are equally relevant, we’ve left some values out. The dashboard also shows some values that you cannot configure yourself through the API but that can be configured in consultation with our Support department, like a static InnoDB buffer pool size for MySQL.

Before:

After:

This update to the Control Panel will go live this week.

Dealing with stubborn newrelic-daemons

On the Hypernode platform we’re very particular about deploying updates to the system. Because stability and predictability are so important for e-commerce, we are very careful about making changes and we strive to have as much control over the entire stack as we can. We are diligent in the way we deal with software configurations: we host our own operating system repositories and package a lot of our own software, so that the chance of things entering our ecosystem without notice and without being properly tested is slim.

While we go far in trying to control all facets of the hosting environment, there are still factors of uncertainty that we can’t anticipate. There are users using their Hypernodes in ways that we could not have predicted (people are very creative!), and at the end of the day software remains software and things sometimes behave in a manner you did not foresee.

For that reason we have a development practice where we write a unit test for any expected functionality of the system. On Hypernode we call these the ‘nodetests’, and they can tell us immediately if something unexpected is happening on the server, as long as it happens within the bounds of things we have explicitly configured.

Every change we make to the server is made with an Ansible task in an Ansible playbook. We write those tasks in an idempotent manner, meaning that a task is not just a script that runs once and breaks if you run it again. The playbooks are meant to be run multiple times and the eventual state should be the same each time (a task ‘ensures’ the state). This is exactly what our automation is doing when you see update_node in your hypernode-log output.
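
To illustrate what ‘ensuring’ a state means, here is a minimal sketch in Python rather than Ansible. It is not taken from our playbooks: the function name ensure_line and the example file are hypothetical. The point is that running it a second time changes nothing and reports that nothing changed.

```python
import os
import tempfile


def ensure_line(path, line):
    """Ensure `line` is present in the file at `path`.

    Returns True if the file had to be changed and False if it was
    already in the desired state, so the call is safe to repeat.
    """
    existing = []
    if os.path.exists(path):
        with open(path) as handle:
            existing = handle.read().splitlines()
    if line in existing:
        return False  # desired state already reached, nothing to do
    existing.append(line)
    with open(path, "w") as handle:
        handle.write("\n".join(existing) + "\n")
    return True


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        target = os.path.join(tmp, "example.conf")  # hypothetical config file
        print(ensure_line(target, "setting = on"))  # first run changes the file
        print(ensure_line(target, "setting = on"))  # second run is a no-op
```

A plain script that always appends the line would leave a duplicate behind on every run; checking the current state first is what makes the operation repeatable.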

$ hypernode-log | grep update

update_node 2021-06-29T07:50:09Z 2021-06-29T07:50:10Z running 2/3 full_update_node_to_update_flow

To make sure the state of each Hypernode is as we expect it to be, we write a Python unit test for each Ansible task to see if it did what we expected it to do. If any of those tests fail, it means the system is in an unexpected state. These nodetests failing is exactly what you see when the output of hypernode-log displays ‘reverted’.

$ hypernode-log | grep update

update_node 2021-06-28T08:11:02Z 2021-06-28T08:16:08Z success 3/3 finished

update_alerting 2021-06-28T08:10:59Z 2021-06-28T08:11:27Z success 2/2 finished

update_node 2021-06-28T07:30:03Z 2021-06-28T07:37:34Z reverted 1/3 finished

update_node 2021-06-28T07:26:10Z 2021-06-28T07:31:59Z reverted 1/3 finished

Generally this is no big deal and no reason for alarm because the automation will fix it with a subsequent configuration management update, or if it’s a real issue one of our support engineers will likely take note of it and fix the problem.
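
If you want to check for reverted runs yourself, the log lines shown above are simple to parse. The sketch below is an illustration only: the field names in LogEntry are our own labels for the six whitespace-separated columns (hypernode-log itself may name them differently), and SAMPLE is copied from the output above.

```python
from collections import namedtuple

# Assumed labels for the six columns of a hypernode-log line.
LogEntry = namedtuple("LogEntry", "action start end state progress task")

SAMPLE = """update_node 2021-06-28T08:11:02Z 2021-06-28T08:16:08Z success 3/3 finished
update_alerting 2021-06-28T08:10:59Z 2021-06-28T08:11:27Z success 2/2 finished
update_node 2021-06-28T07:30:03Z 2021-06-28T07:37:34Z reverted 1/3 finished
update_node 2021-06-28T07:26:10Z 2021-06-28T07:31:59Z reverted 1/3 finished
"""


def parse_log(text):
    """Parse log lines into LogEntry tuples, skipping lines with another shape."""
    entries = []
    for line in text.splitlines():
        fields = line.split()
        if len(fields) == 6:
            entries.append(LogEntry(*fields))
    return entries


def reverted_entries(entries):
    """Return only the entries whose state column reads 'reverted'."""
    return [entry for entry in entries if entry.state == "reverted"]


if __name__ == "__main__":
    for entry in reverted_entries(parse_log(SAMPLE)):
        print(entry.action, "reverted, started at", entry.start)
```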

But sometimes we find software behaving in a way that is unexpected, and we have to debug and find out exactly why things aren’t going as we expected. This is exactly what happened this week with the newrelic-daemon. On Hypernode you can enable and disable New Relic. When you enable New Relic, it starts a service that can help you monitor and debug performance bottlenecks in your application.

We noticed that when New Relic was first enabled on a Hypernode and then later disabled, the newrelic-daemon process was sometimes still running even though the systemd service had been stopped. Further investigation showed that the newrelic-daemon process could get into a state where it did not respond to a SIGTERM signal and could only be stopped with a SIGKILL (kill -9).

In this release we have updated our configuration management so that, when New Relic has been disabled and the newrelic-daemon process does not terminate after a graceful signal, the process is forcefully killed.
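
The general pattern of trying a graceful stop first and escalating only when needed can be sketched in a few lines of Python. This is not our actual configuration management code: stop_process and the grace period are hypothetical, and the stubborn child process merely stands in for a daemon that ignores SIGTERM. The sketch assumes a POSIX system.

```python
import subprocess
import sys


def stop_process(proc, grace_seconds=5.0):
    """Stop `proc` gracefully if possible and forcefully otherwise.

    Sends SIGTERM first; if the process is still alive after
    `grace_seconds`, sends SIGKILL, which cannot be caught or ignored.
    Returns "terminated" or "killed" to show which path was taken.
    """
    proc.terminate()  # SIGTERM on POSIX
    try:
        proc.wait(timeout=grace_seconds)
        return "terminated"
    except subprocess.TimeoutExpired:
        proc.kill()  # SIGKILL on POSIX
        proc.wait()  # reap the process so it does not linger as a zombie
        return "killed"


if __name__ == "__main__":
    # Stand-in for a stubborn daemon: a child process that ignores SIGTERM.
    stubborn = subprocess.Popen(
        [
            sys.executable,
            "-c",
            "import signal, time; "
            "signal.signal(signal.SIGTERM, signal.SIG_IGN); "
            "print('ready', flush=True); time.sleep(60)",
        ],
        stdout=subprocess.PIPE,
    )
    stubborn.stdout.readline()  # wait until the child ignores SIGTERM
    print(stop_process(stubborn, grace_seconds=1.0))
```

Keeping the graceful attempt first gives a well-behaved process the chance to clean up, while the escalation ensures the final state is the same either way: the process is gone.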