This release brings a new version of hypernode-importer3, some bugfixes for hypernode-parse-nginx-log and various changes to our cloud operations job processing backend.

Magento path discovery in the importer is now breadth-first

The hypernode-importer3 tool will now find the top-level Magento installation in the specified path. Previously, if the supplied path contained a Magento installation that itself contained another Magento installation, which of the two was found depended on the find implementation on the remote server and the order in which it listed the files.

The order in which find lists files is determined by how the virtual filesystem supplies them. That order can vary per filesystem; depending on the filesystem, it might be based on the order of directory creation or some other factor.

For example, in a situation with a nested Magento installation named public in /data/web/public:

$ rsync -av /data/web/public /data/web/public/public

Importing that shop from another Hypernode with

hypernode-importer3 --path /data/web/public

could work if public happened to be listed after app.

$ find public -name local.xml | grep etc

public/app/etc/local.xml # <- this one would be found

public/public/public/app/etc/local.xml

# Importing from another Hypernode

$ hypernode-importer3 --host vdloo.hypernode.io --user app --path /data/web/public --dry-run |& grep Found

Found Magento 1 installation in /data/web/public/!

But if the nested directory happened to be listed before app, an unexpected shop might be found.

$ find public -name local.xml | grep etc

public/public/public/app/etc/local.xml # <- this one would be found

public/app/etc/local.xml

# Importing from another Hypernode

$ hypernode-importer3 --host bonoboimperium.hypernode.io --user app --path /data/web/ --dry-run |& grep Found

Found Magento 1 installation in /data/web/public/public/public/!

This update adds an additional sort to the file listing so it will be deterministic across different servers and no longer dependent on the underlying filesystem or find implementation.
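As a rough illustration of why sorting makes the result deterministic, the sketch below picks the config file closest to the search root by ordering paths on depth first and name second. This is not the importer's actual code; the function name `pick_top_level` and the tie-breaking rule are assumptions made for the example.

```python
from pathlib import PurePosixPath


def pick_top_level(config_paths):
    """Return the config path closest to the search root, or None if empty.

    Hypothetical helper: the real importer may order its listing differently.
    """
    if not config_paths:
        return None
    # Order by depth first and by name second, so the outcome no longer
    # depends on the order in which the remote find listed the files.
    return min(config_paths, key=lambda p: (len(PurePosixPath(p).parts), p))


# The same two files, listed in both orders a filesystem might produce.
listing_a = ["public/app/etc/local.xml", "public/public/public/app/etc/local.xml"]
listing_b = list(reversed(listing_a))

print(pick_top_level(listing_a))
print(pick_top_level(listing_b))
```

Both calls select the same path regardless of listing order, which is the property the update is after.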

$ find public -name local.xml | grep etc

$ hypernode-importer3 --host bonoboimperium.hypernode.io --user app --path /data/web/ --dry-run |& grep Found

Found Magento 1 installation in /data/web/public/!

If you do want to import the nested shop, you can do that by specifying a path closer to the local.xml or env.php of the shop.

$ hypernode-importer3 --host bonoboimperium.hypernode.io --user app --path /data/web/public --dry-run |& grep Found

Found Magento 1 installation in /data/web/public/!

$ hypernode-importer3 --host bonoboimperium.hypernode.io --user app --path /data/web/public/1234backup --dry-run |& grep Found

Found Magento 1 installation in /data/web/public/1234backup/public/!

The importer will now check if the remote site will fit on the Hypernode you are importing on

The importer now checks whether the site on the remote host will fit on the disk that holds the /data partition. If it cannot determine the remote size, it assumes the site will fit and tries the import anyway. Because the importer is often used to re-import the same shop, we compare against total disk space rather than free bytes. Note that this will not catch a situation in which the disk is large enough but filled up by data other than the shop itself.
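The decision described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the importer's implementation: the names `site_fits` and `data_disk_total` are hypothetical, and how the remote size is measured is left out entirely.

```python
import shutil


def site_fits(remote_size_bytes, disk_total_bytes):
    """Decide whether an import should proceed (hypothetical helper).

    remote_size_bytes is None when the remote size could not be determined.
    """
    if remote_size_bytes is None:
        # Unknown remote size: assume it fits and try the import anyway.
        return True
    # Compare against the total disk size, not the free space, because a
    # re-import of the same shop replaces data that is already on the disk.
    return remote_size_bytes <= disk_total_bytes


def data_disk_total(path="/data"):
    """Total size in bytes of the disk that holds the given path."""
    return shutil.disk_usage(path).total


# Example with made-up numbers: a 60 GB shop and a 50 GB disk.
print(site_fits(60 * 10**9, 50 * 10**9))
print(site_fits(None, 50 * 10**9))
```

On a Hypernode the second argument would come from something like `data_disk_total()`. As noted, a comparison against total size says nothing about how much of the disk is taken up by unrelated data.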

$ hypernode-importer3 --host vdloo.hypernode.io --path /data/web/public --verbose --set-default-url

...

Executing Magento 1 import strategy

Aborted: The disk size on the hypernode is lower than the size of the magento installation.

New set maintenance on source flag for hypernode-importer3

This flag had not yet been implemented in the new importer. It now is, and it works the same as the flag in the old Python 2 version of the importer.

$ hypernode-importer3 --help

..

--set-maintenance-on-source

Set the original shop in maintenance mode. You

probably want this if you are moving the original shop

instead of only copying it.

Updated hypernode-parse-nginx-log (pnl)

This release also includes a new hypernode-parse-nginx-log, a tool that can be used to parse information about requests to your webserver. This updated version handles dubious input better and won’t crash if the logs it is parsing contain control characters or the like.
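To give an idea of what handling such input can involve, here is a minimal sketch that strips control characters from a log line before it is parsed. It is not taken from pnl itself; the name `sanitize_line` and the choice to drop these characters rather than escape them are assumptions for the example.

```python
import re

# ASCII control characters, except tab (\x09) and newline (\x0a).
CONTROL_CHARS = re.compile(r"[\x00-\x08\x0b-\x1f\x7f]")


def sanitize_line(line):
    """Remove control characters from a raw log line (hypothetical helper)."""
    return CONTROL_CHARS.sub("", line)


# A request path containing a NUL byte and an escape character.
raw = "GET /\x00index\x1b.php HTTP/1.1\n"
print(repr(sanitize_line(raw)))
```

Cleaning each line up front means the parsing code that follows only ever sees printable text, tabs and newlines.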

Also, we’ve added the new --days-ago and --date flags.

$ pnl --help

..

--days-ago DAYS_AGO Analyze logs and outputs for a specific number of days

ago

--date DATE Analyze logs and outputs for a specific date

Various changes to our job processing backend

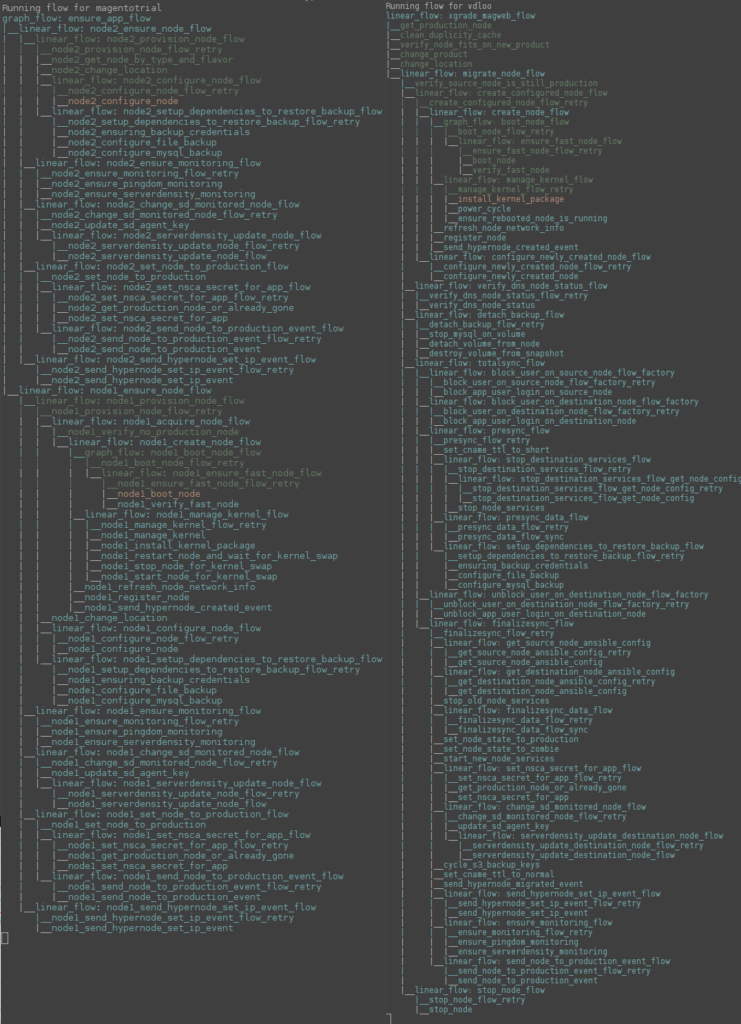

We are in the process of preparing our backend to support Hypernode clusters. We are in the very early stages of development and are currently working on adapting our data model to support multiple nodes per account. To manage the complexity of provisioning multiple servers simultaneously, we have developed some internal tooling to visualize processes on our job board in real time.

To give you a sneak peek into what development of cluster automation for Hypernode looks like behind the scenes, the screenshot above is an example of what the process might look like for spawning a cluster (left) and performing a regular Hypernode upgrade (right). In the coming months we will be adapting all our processes to work for Hypernodes that consist of more than one node.